Current smartphone virtual reality solutions fail to engage the user in a truly realistic way. They are mostly static experiences in which the environment moves around the user, rather than the user moving through the environment. Additional peripherals such as Google’s Daydream controller try to improve this static experience by adding motion-based input to make the user feel more immersed, but they still do not allow the user to move through the environment.

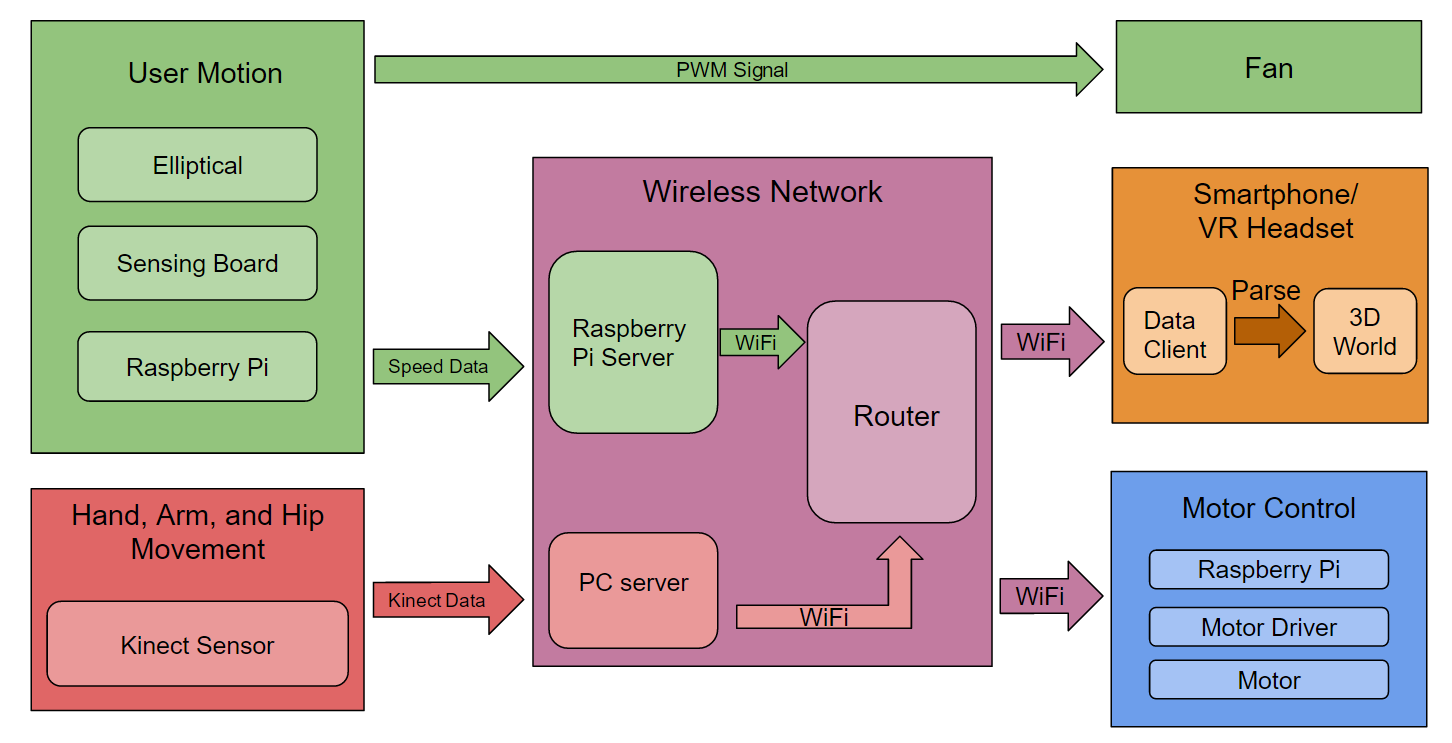

Step is a virtual reality system that will change the way users interact with virtual worlds through enhanced immersion. Unlike most virtual reality systems, Step will make the user’s movements part of the virtual environment: walking, running, turning, and other physical movements will correspond to movements in the virtual world. Besides making virtual reality more realistic, it will also improve user health and provide a platform for entertainment or training.